Trio Diffusion (v2)

Autoregressive infinite image generation with diffusion models — ablations and findings

At a glance:

- Problem: Standard diffusion models generate fixed-size images. Can we grow the canvas indefinitely, one patch at a time?

- Approach: Each patch is generated by a diffusion model conditioned on three spatial neighbors (L-shape) and a frozen vision backbone (CLIP or DINOv2). Fully autoregressive, no fixed canvas size.

- What works: Locally convincing patches, successful color/texture transfer from seed images, learned position-dependent statistics (sky at top).

- What doesn’t: No coherent objects emerge across patches. Five backbone/position-encoding ablations all fail to produce cross-block structure.

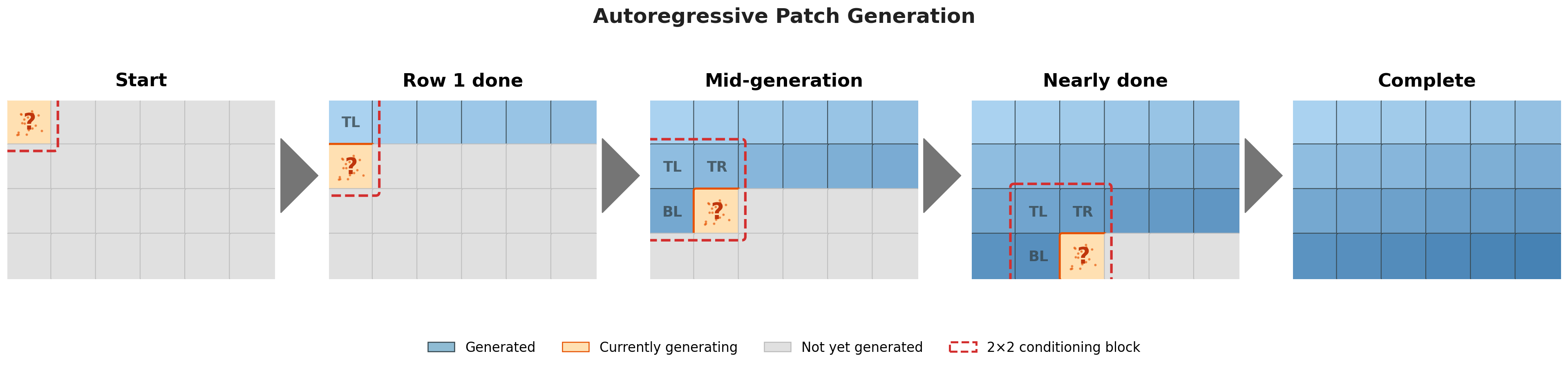

Standard diffusion models generate fixed-size images. This project instead generates images patch by patch, autoregressively: each new patch is conditioned on three spatial neighbors arranged in an L-shape (top-left, top-right, bottom-left). The L-shape provides enough local context to continue the image to the right, downward, or diagonally, so the canvas can grow without bound. The model is trained from scratch in pixel space on 3,000–10,000 COCO images, depending on the configuration. It builds on a previous version that had no global conditioning.

    ┌─────────────┬─────────────┐
    │  TOP LEFT   │  TOP RIGHT  │   Context patches (known)
    ├─────────────┼─────────────┤
    │ BOTTOM LEFT │    ????     │   Target patch (generated by diffusion)
    └─────────────┴─────────────┘

Method

Spatial inpainting

Each generation step is a 2×2 inpainting problem. The three context patches and the noisy target are tiled into a single spatial block: 3 RGB channels plus a binary mask indicating which quadrants are known. Because the patches sit at their true spatial positions, standard convolutions see across patch borders and learn seamless transitions. Unlike Patch Diffusion (Wang et al., NeurIPS 2023), which uses patches for training efficiency on fixed images, this is fully autoregressive over a potentially unbounded grid.
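The tiling described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the project's code: the function name `build_block`, the channel-first layout, the single-channel mask, and the convention that 1 means "known" are all assumptions; only the 64×64 patch size and the "RGB plus binary mask" input come from the text.

```python
import numpy as np

PATCH = 64  # patch side length in pixels, as stated in the Training section

def build_block(tl, tr, bl, noisy_target):
    """Tile four (3, PATCH, PATCH) patches into one (4, 2*PATCH, 2*PATCH) input.

    Channels 0-2 are RGB; channel 3 is a binary mask (1 = known context,
    0 = target quadrant to be generated). The mask layout is an assumption.
    """
    top = np.concatenate([tl, tr], axis=2)               # join along width
    bottom = np.concatenate([bl, noisy_target], axis=2)
    rgb = np.concatenate([top, bottom], axis=1)          # join along height

    mask = np.ones((1, 2 * PATCH, 2 * PATCH), dtype=rgb.dtype)
    mask[:, PATCH:, PATCH:] = 0.0                        # bottom-right is unknown
    return np.concatenate([rgb, mask], axis=0)

rng = np.random.default_rng(0)
tl, tr, bl = (rng.uniform(-1, 1, (3, PATCH, PATCH)).astype(np.float32)
              for _ in range(3))
noisy = rng.standard_normal((3, PATCH, PATCH)).astype(np.float32)
block = build_block(tl, tr, bl, noisy)
print(block.shape)  # (4, 128, 128)
```

Because all four quadrants share one spatial tensor, a 3×3 convolution centered on a pixel at a patch border sees pixels from both sides of that border.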

RePaint conditioning

At every denoising step, the known regions (TL, TR, BL) are re-noised to their correct noise level and re-injected, providing persistent spatial context throughout the entire reverse process rather than injecting it once and hoping it survives 1,000 denoising steps. This is the key mechanism that keeps neighboring patches “visible” to the model at every step. From RePaint (Lugmayr et al., CVPR 2022).
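A rough sketch of the re-injection step, under stated assumptions: the schedule endpoints (1e-4 to 0.02) are the common DDPM defaults rather than values given here, and the helper names `renoise_known` and `reinject` are hypothetical. The known pixels are sampled from the forward process q(x_t | x_0) at the current timestep and pasted over the model's running sample wherever the mask is 1.

```python
import numpy as np

T = 1000                                   # timesteps, from the Training section
betas = np.linspace(1e-4, 0.02, T)         # linear schedule; endpoints are assumed
alpha_bar = np.cumprod(1.0 - betas)        # cumulative signal retention

def renoise_known(x0_known, t, rng):
    """Sample q(x_t | x_0) for the clean context pixels."""
    eps = rng.standard_normal(x0_known.shape)
    return np.sqrt(alpha_bar[t]) * x0_known + np.sqrt(1.0 - alpha_bar[t]) * eps

def reinject(x_t, x0_known, mask, t, rng):
    """Overwrite the known quadrants of x_t with freshly noised context.

    mask is 1 where pixels are known and 0 in the target quadrant.
    """
    return mask * renoise_known(x0_known, t, rng) + (1.0 - mask) * x_t

rng = np.random.default_rng(0)
x0 = rng.uniform(-1, 1, (3, 128, 128))     # clean 2x2 block (target part unused)
mask = np.ones((1, 128, 128))
mask[:, 64:, 64:] = 0.0                    # bottom-right quadrant is the target
x_t = rng.standard_normal((3, 128, 128))   # current sample from the reverse process
out = reinject(x_t, x0, mask, t=500, rng=rng)
```

The target quadrant passes through untouched, while the three context quadrants always carry exactly the amount of noise the model expects at step t.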

Global context via vision backbones

A frozen vision backbone (CLIP or DINOv2) encodes a broader view of the scene and injects it into every UNet block via cross-attention. The backbone can be driven in two ways: (1) autoregressive, where the partial canvas is re-encoded at each generation step; or (2) seed image, where a fixed embedding from a reference image steers the entire generation. The cross-attention output is gated by a learned tanh scalar, initialized at 0.5 rather than zero: zero-init caused a starvation equilibrium in which the cross-attention pathway carrying the backbone features never received a useful gradient signal (the backbone itself is frozen either way). Classifier-free guidance modulates backbone influence at inference.
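To make the gating and guidance concrete, here is a minimal single-head sketch in NumPy. It is illustrative only: the residual form `h + tanh(g) * attn`, the weight shapes, and the function names are assumptions, and the text does not say whether 0.5 refers to the raw gate parameter or the post-tanh value (the sketch treats it as the raw parameter). The guidance formula is the standard classifier-free combination.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)   # subtract max for stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def gated_cross_attention(h, ctx, Wq, Wk, Wv, gate_param):
    """h: (N, d) UNet tokens; ctx: (M, d_ctx) frozen backbone tokens."""
    q, k, v = h @ Wq, ctx @ Wk, ctx @ Wv
    attn = softmax(q @ k.T / np.sqrt(q.shape[-1])) @ v
    return h + np.tanh(gate_param) * attn      # gate scales the injected context

def cfg(eps_uncond, eps_cond, scale):
    """Classifier-free guidance: extrapolate from unconditional to conditional."""
    return eps_uncond + scale * (eps_cond - eps_uncond)

rng = np.random.default_rng(0)
N, M, d, d_ctx = 16, 4, 8, 6                   # toy sizes, chosen arbitrarily
h = rng.standard_normal((N, d))
ctx = rng.standard_normal((M, d_ctx))
Wq = rng.standard_normal((d, d))
Wk = rng.standard_normal((d_ctx, d))
Wv = rng.standard_normal((d_ctx, d))

closed = gated_cross_attention(h, ctx, Wq, Wk, Wv, gate_param=0.0)
opened = gated_cross_attention(h, ctx, Wq, Wk, Wv, gate_param=0.5)
```

In this formulation a gate of exactly zero makes the block an identity, and every gradient flowing back into `Wq`, `Wk`, and `Wv` is multiplied by tanh(0) = 0, which is consistent with the starvation behavior reported for zero-init.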

Training

The model is a UNet (177M parameters, base channels 96, self-attention at 16×16 and deeper) operating on 64×64 patches in pixel space; no VAE, no latent space. With a step size of 32 and overlapping extraction, even 3,000 COCO images yield a large number of training patches. 1,000 diffusion timesteps with a linear beta schedule. Multi-GPU training via accelerate.
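A back-of-the-envelope count shows why a few thousand images go a long way. The numbers below rest on assumptions not stated in the text: that each training sample is a 128×128 window (a 2×2 block of 64×64 patches), that the step size of 32 is in pixels, and that images are used at a typical COCO resolution of 640×480 without resizing.

```python
def windows_per_image(height, width, window=128, stride=32):
    """Number of overlapping window positions in one image."""
    if height < window or width < window:
        return 0
    rows = (height - window) // stride + 1
    cols = (width - window) // stride + 1
    return rows * cols

per_image = windows_per_image(480, 640)
print(per_image)          # 204
print(per_image * 3000)   # 612000
```

Under these assumptions, 3,000 images give on the order of 600,000 training blocks, though heavily overlapping windows are far from independent samples.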

Results

Patch completion

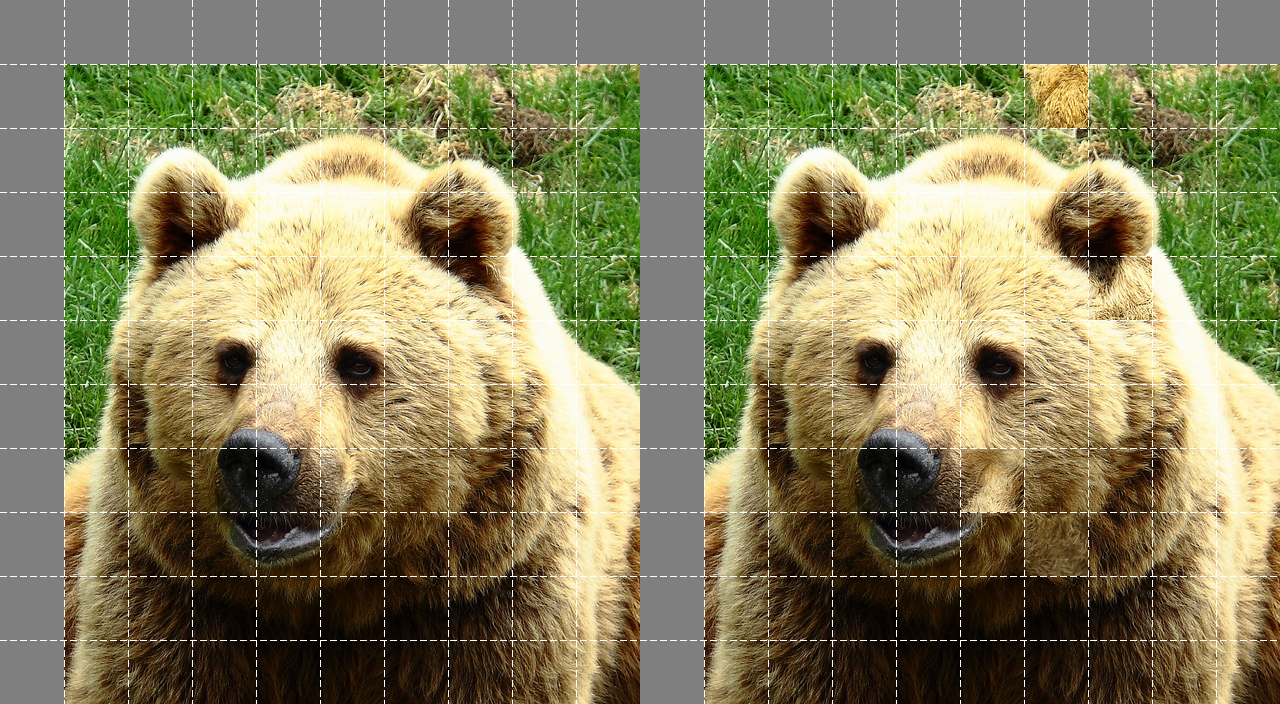

During validation, the model receives three ground-truth context patches plus a CLIP embedding and generates the missing bottom-right patch. In each pair below, the left image is the original and the right has 4 patches replaced by model output.

Patches are locally convincing: edge continuity, texture, and color match their neighbors even on unseen images. The challenge is chaining them together; each patch is plausible in isolation, but global structure does not emerge.

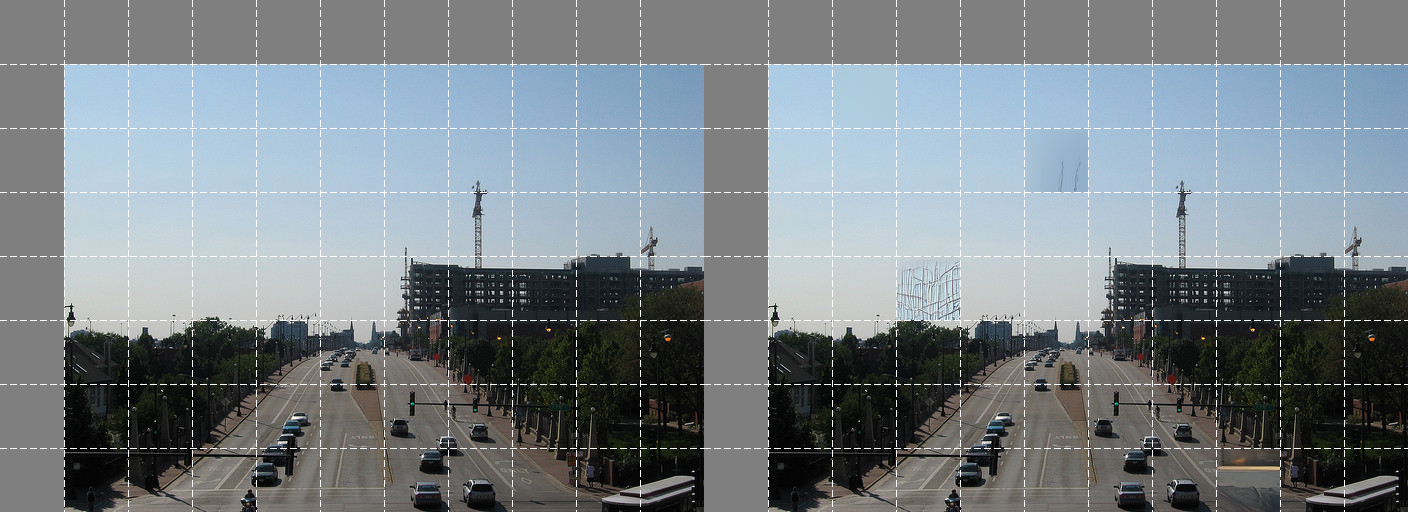

Unbounded generation

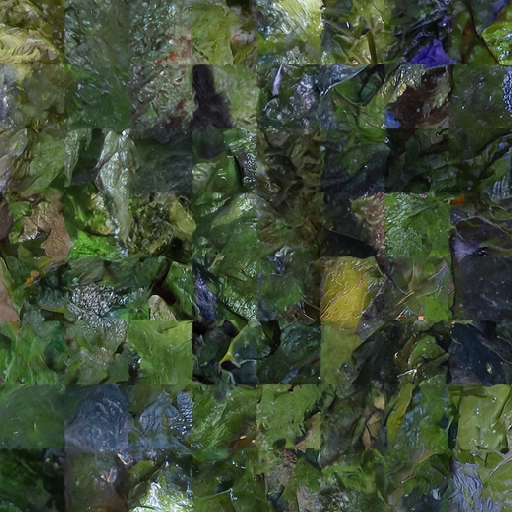

Full autoregressive raster-scan: every patch generated sequentially, each conditioned on previously generated neighbors. Vibrant textures emerge, but no recognizable objects spanning multiple patches.
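The raster-scan order can be sketched as a simple nested loop. This is a schematic, not the project's sampler: `generate_patch` stands in for a full reverse-diffusion run, and seeding the first row and first column is an assumption, since the write-up does not say how canvas borders are handled.

```python
def raster_scan(rows, cols, seed_patch, generate_patch):
    """Fill a rows x cols grid; generate_patch(tl, tr, bl) returns a new patch.

    Row 0 and column 0 are filled with seed_patch so that every generated
    cell has its three L-shaped neighbors available (an assumption).
    """
    grid = [[None] * cols for _ in range(rows)]
    for c in range(cols):
        grid[0][c] = seed_patch
    for r in range(rows):
        grid[r][0] = seed_patch

    order = []
    for r in range(1, rows):              # top to bottom
        for c in range(1, cols):          # left to right within each row
            tl = grid[r - 1][c - 1]
            tr = grid[r - 1][c]
            bl = grid[r][c - 1]
            grid[r][c] = generate_patch(tl, tr, bl)
            order.append((r, c))
    return grid, order

# Toy stand-in: "patches" are integers and the generator just sums its context.
grid, order = raster_scan(3, 3, seed_patch=1,
                          generate_patch=lambda tl, tr, bl: tl + tr + bl)
print(order)  # [(1, 1), (1, 2), (2, 1), (2, 2)]
```

Every generated cell depends only on cells produced earlier in the scan, so rows or columns can keep being appended; it also means each patch sees the rest of the canvas only through its three immediate neighbors.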

Color and texture transfer

When conditioned on a seed image, the backbone successfully transfers color palette and texture to the generated output.

Position-dependent statistics

With the DINOv2 backbone, sky-blue tones consistently appear near the top of umbrella-seeded generations across different random seeds — matching the spatial layout of the original photograph.

Ablation: systematic experiment summary

The previous version had no global conditioning at all. In this version, I systematically tested whether vision backbones and positional encoding could give the model a sense of broader scene structure. Five configurations, all trained for 475+ epochs on the same data:

| Config | Backbone | Training images | Positional encoding |
|---|---|---|---|
| clip_10k | CLIP ViT-B/32 (frozen) | 10K | Yes |
| dino_3k | DINOv2 ViT-B/14 (frozen) | 3K | Yes |
| dino_10k | DINOv2 ViT-B/14 (frozen) | 10K | Yes |
| no_backbone_3k | None | 3K | Yes |
| no_backbone_no_position_encoding_3k | None | 3K | No |

Position encoding alone does nothing. no_backbone_3k and no_backbone_no_position_encoding_3k produce identical results; without a global signal, positional encoding has no effect.

Backbone injection provides context but not structure. CLIP and DINOv2 successfully transfer color palette, texture, and position-dependent statistics, but coherent objects never emerge across patch boundaries.

More data doesn’t help. dino_3k and dino_10k show no difference, which suggests the bottleneck is architectural rather than the amount of training data.

The backbone embeddings encode high-level semantics (scene type, dominant color) but not the spatial layout information needed to coordinate structure across patch boundaries. The cross-attention gates learn to use backbone features (gate values settle around 0.3–0.5), but the features themselves lack the spatial resolution to guide local patch decisions.

What didn’t work

CLIP text conditioning

CLIP’s text encoder can drive the cross-attention pathway instead of an image encoder. In theory, a text prompt like “blue” or “red” should steer the output. In practice, the model ignores text embeddings entirely — “blue”, “red”, and “cat” produce nearly identical outputs.

The cross-attention pathway only ever sees image embeddings during training. CLIP text embeddings occupy a different region of the shared embedding space: close enough in CLIP’s contrastive sense, but apparently too far out-of-distribution for the learned cross-attention layers to act on.

Open questions

1. Why are patch boundaries still visible?

The trio context gives each patch three immediate neighbors, and RePaint conditioning re-injects them at every denoising step. Yet seam artifacts persist. Possible directions:

- Overlapping patches with blending — generate patches with overlap and blend the overlapping regions.

- Boundary continuity loss — an explicit loss on patch edges (as attempted in the previous version), possibly with stronger formulations (adversarial boundary discriminator, perceptual loss at edges).
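As a sketch of the first direction, two horizontally adjacent patches that share an overlap strip could be cross-faded with a linear ramp. This has not been tried in the project; the function name, the linear weighting, and the 16-pixel overlap are all illustrative choices.

```python
import numpy as np

def blend_horizontal(left, right, overlap):
    """Join two (C, H, W) patches whose last/first `overlap` columns coincide.

    Requires overlap >= 1. Inside the strip, the weight shifts linearly
    from the left patch to the right patch.
    """
    ramp = np.linspace(0.0, 1.0, overlap)            # weight given to `right`
    mixed = (1.0 - ramp) * left[..., -overlap:] + ramp * right[..., :overlap]
    return np.concatenate(
        [left[..., :-overlap], mixed, right[..., overlap:]], axis=-1)

left = np.zeros((3, 64, 64))
right = np.ones((3, 64, 64))
joined = blend_horizontal(left, right, overlap=16)
print(joined.shape)  # (3, 64, 112)
```

Blending hides intensity steps at the seam, but it cannot reconcile two patches that disagree about content, so it would complement rather than replace a boundary loss.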

2. Why don’t coherent objects emerge across multiple patches?

The model generates plausible textures and learns position-dependent color statistics, but never forms recognizable structures that span more than one patch. Possible directions:

- Hierarchical latent planning — generate a coarse low-resolution layout first, then condition each patch on its corresponding region.

- Multi-scale context windows — feed the model a downsampled view of the full canvas generated so far, in addition to the three high-resolution neighbor patches.
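For the multi-scale idea, the extra input could be as simple as an average-pooled copy of the canvas generated so far. The sketch below is hypothetical: the function name, the pooling factor of 8, and the canvas size are arbitrary, and how the result would be fed to the UNet (extra channels, a second encoder, cross-attention tokens) is left open.

```python
import numpy as np

def downsample(canvas, factor):
    """Average-pool a (C, H, W) canvas by an integer factor.

    Rows and columns that do not fill a complete factor x factor cell
    are cropped before pooling.
    """
    c, h, w = canvas.shape
    h2, w2 = h // factor, w // factor
    cropped = canvas[:, :h2 * factor, :w2 * factor]
    return cropped.reshape(c, h2, factor, w2, factor).mean(axis=(2, 4))

canvas = np.ones((3, 256, 384))       # e.g. a 4 x 6 grid of 64x64 patches
coarse = downsample(canvas, 8)
print(coarse.shape)  # (3, 32, 48)
```

Unlike a single pooled backbone embedding, a low-resolution map of this kind keeps an explicit spatial layout, which is the information the ablations suggest is missing.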